OutWit Hub’s New Features

Important Note: The tutorials you will find on this blog may become outdated with new versions of the program. We have now added a series of built-in tutorials in the application which are accessible from the Help menu.

You should run these to discover the Hub.

The newest update of the Hub contains some exciting new features. This tutorial explains these new functionalities; for a more detailed explanation of how to use the Hub’s basic features, please refer to the existing list of tutorials.

Let’s get started…

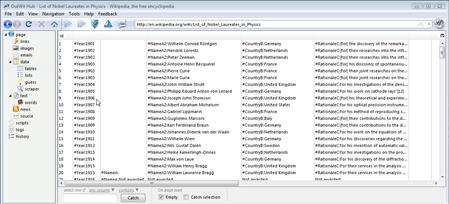

Open the following Wikipedia page which displays a list of all the Nobel Laureates in Physics:

http://en.wikipedia.org/wiki/List_of_Nobel_Laureates_in_Physics

As you can see, this page contains a table of the Nobel Laureates’ names, countries, years and rationales. We only need some of the information on this page, and we can use OutWit Hub to grab the relevant data and export it into a spreadsheet.

To begin extracting data, open the Table view.
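For readers curious about what this step amounts to, the idea of reading the rows of an HTML table and writing them out in a spreadsheet-friendly format can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not how OutWit Hub works internally: it uses only the standard `html.parser` and `csv` modules, assumes well-formed `<tr>`/`<td>` markup, and the sample HTML and the helper name `table_to_csv` are hypothetical.

```python
import csv
import io
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collects the text of every <th>/<td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []    # finished rows, each a list of cell strings
        self._row = None  # row currently being built
        self._cell = None # text fragments of the current cell

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            if self._row:  # ignore rows without cells
                self.rows.append(self._row)
            self._row = None

    def handle_data(self, data):
        if self._cell is not None:  # keep only text that sits inside a cell
            self._cell.append(data)


def table_to_csv(html: str) -> str:
    """Hypothetical helper: return the table rows found in `html` as CSV text."""
    parser = TableParser()
    parser.feed(html)
    out = io.StringIO()
    csv.writer(out).writerows(parser.rows)
    return out.getvalue()


# Hypothetical sample shaped like the laureates table.
SAMPLE = """
<table>
  <tr><th>Year</th><th>Laureate</th><th>Country</th></tr>
  <tr><td>1901</td><td>Wilhelm Conrad Röntgen</td><td>Germany</td></tr>
  <tr><td>1902</td><td>Hendrik Lorentz</td><td>Netherlands</td></tr>
</table>
"""

if __name__ == "__main__":
    print(table_to_csv(SAMPLE))
```

The resulting text can be saved with a `.csv` extension and opened in any spreadsheet program.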

Below the results, there is a selection panel that allows you to enter specific criteria.

The different types of cheddar are now highlighted, and we can use the corresponding button to move the selection into our Catch, or right-click and choose ‘Export Selection As…’.
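Conceptually, selecting by criteria and then exporting the selection is a filter over the extracted rows followed by an export of only the matching ones. The sketch below illustrates that idea in Python; it is an assumption-based illustration, not a mirror of the Hub’s actual selection options. The criterion (a case-insensitive substring match on one column), the helper names `select_rows` and `export_selection`, and the sample data are all hypothetical.

```python
import csv
import io


def select_rows(rows, column, needle):
    """Hypothetical helper: keep the header plus every row whose `column`
    contains `needle`, ignoring case."""
    header, body = rows[0], rows[1:]
    idx = header.index(column)  # raises ValueError if the column is missing
    picked = [r for r in body if needle.lower() in r[idx].lower()]
    return [header] + picked


def export_selection(rows) -> str:
    """Write the selected rows as CSV text (the 'Export Selection As' step)."""
    out = io.StringIO()
    csv.writer(out).writerows(rows)
    return out.getvalue()


# Hypothetical data echoing the cheddar example.
cheeses = [
    ["Name", "Type"],
    ["Mature Cheddar", "hard"],
    ["Brie", "soft"],
    ["Smoked Cheddar", "hard"],
]

if __name__ == "__main__":
    print(export_selection(select_rows(cheeses, "Name", "cheddar")))
```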

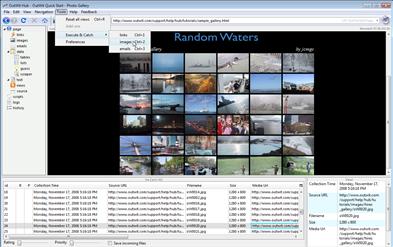

The last new feature we’ll cover today is ‘Execute and Catch’.

This feature is particularly useful for links, images and emails.

While browsing, select ‘Execute and Catch’ in the ‘Tools’ menu and then ‘Images’: all the photos on the current page are caught without your having to leave the Page view.

This feature is helpful when you come across useful information that you’d like to keep while browsing through many pages.
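Under the hood, catching the images of a page comes down to collecting every image address it contains. As a rough sketch (not OutWit’s code), this can be done with Python’s standard `html.parser`, resolving relative `src` values against the page URL with `urllib.parse.urljoin` and skipping duplicates; the helper name `catch_images`, the page URL and the HTML below are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ImageCollector(HTMLParser):
    """Gathers absolute URLs of <img src=...> elements, keeping first-seen order."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.images = []

    def handle_starttag(self, tag, attrs):
        # Self-closing <img ... /> tags also arrive here via the default
        # handle_startendtag implementation.
        if tag != "img":
            return
        src = dict(attrs).get("src")
        if src:  # skip <img> elements without a usable src
            url = urljoin(self.base_url, src)
            if url not in self.images:
                self.images.append(url)


def catch_images(html, base_url):
    """Hypothetical helper: return the unique absolute image URLs in `html`."""
    collector = ImageCollector(base_url)
    collector.feed(html)
    return collector.images


if __name__ == "__main__":
    page = '<p><img src="/a.png"><img src="b.jpg"/><img src="/a.png"></p>'
    for url in catch_images(page, "https://example.com/gallery/page.html"):
        print(url)
```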

This concludes the New Features tutorial. As always, we welcome any suggestions, comments or feedback. We couldn’t do this without you.

by jcc

Tags: Execute and Catch, Select Different, Select Identical, Select Inversion, Select Similar

December 9th, 2008 at 1:26 pm

Hi,

Is it possible to extend the scraper’s fields? It only has 15, but there may be many more columns to fill: say you want to scrape the technical details of a product that has more than 15 unique tag fields.

How do I catch images with the scraper? It copies the image location or image name, but I cannot synchronize it so that it catches the relevant image linked to each record.

Say I am scraping a list of companies (name, address, email and so forth) but I want to catch the logo as well: is there a way of storing the logo as an image linked to the specific record?

So far I have to scrape the image name, catch the images independently, load everything into the same folder, indicate the path to that folder and hope every image name is unique, which is error-prone.

That’s in theory, because in practice I haven’t had the time to test this yet.

Any other technique?

PS: This product is fantastic, and if there were an Internet award for best product of the year, it should get it. However, I am not inclined to publicize it too much, because it would make the competition even more competitive!! (wink!)

cocobeach

December 9th, 2008 at 5:30 pm

Thank you for the compliment! At present, the limit for tag fields is 15 and you cannot catch images. We are working on both, and future updates will include those functionalities. I do not have a release date yet, but it is on our list of things to do. The way you suggested is the best option for now. Have you tried OutWit Images yet? It makes catching and saving images much easier.
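The workaround discussed above (scrape the image name, download the images separately, then point each record at a file) becomes less error-prone if the local file name is derived from the full image URL rather than from the bare file name, since two sites can both call their logo `logo.png`. Here is a minimal Python sketch of that idea, assuming records are dictionaries with a hypothetical `logo_url` field and that all images are saved into one folder. The field names, helper names and hashing scheme are assumptions of this example, not an OutWit Hub feature.

```python
import hashlib
import os
from urllib.parse import urlparse


def local_name(image_url: str) -> str:
    """Build a file name from a short SHA-1 digest of the full URL plus the
    original extension, so different URLs practically never share a name."""
    digest = hashlib.sha1(image_url.encode("utf-8")).hexdigest()[:16]
    ext = os.path.splitext(urlparse(image_url).path)[1].lower()
    return digest + ext


def link_images(records, folder):
    """Add a hypothetical `logo_file` field giving where each record's image
    should be saved inside `folder` (None when the record has no image)."""
    for rec in records:
        url = rec.get("logo_url")
        rec["logo_file"] = os.path.join(folder, local_name(url)) if url else None
    return records


if __name__ == "__main__":
    companies = [
        {"name": "Acme", "logo_url": "https://acme.example/img/logo.png"},
        {"name": "Globex", "logo_url": "https://globex.example/logo.png"},
        {"name": "Initech"},
    ]
    for rec in link_images(companies, "logos"):
        print(rec["name"], rec["logo_file"])
```

Because the name depends only on the URL, the same image always maps to the same file, and the download step can reuse `local_name` to save each image under the path stored in its record.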

January 2nd, 2009 at 1:46 am

Great app. I’ve been thinking about writing something similar in Perl. I would like to develop more outfits for you. One problem I had was with a site where I had a large page of ebooks I had paid for and had to download: 90% of the files downloaded incomplete. It was a major bummer, and I had to go back, click on them all by hand and download them manually. Until then I was quite impressed with OutWit. I’m wondering whether it was a bug, or something done by the site itself to prevent automated downloading. Does it register as a spider, or what is its user agent? What language is it written in? How can I help? I have some ideas for helpful and commercial applications.

Cheers

M

January 4th, 2009 at 7:21 pm

Is there a way to automate OutWit? For example, if I had a list of web addresses in an Excel sheet and had set up the scraper, is there a way to give that list to OutWit and have it automatically navigate through the list and catch the data defined in the scraper?

January 8th, 2009 at 1:01 pm

For the moment, it is not possible; however, we will be releasing a future update that will have this functionality.

February 10th, 2009 at 7:12 am

I would like to take a small piece of space here and drop a huge Thank you.

I spent a weekend trying to configure different apps that charge quite a reasonable amount, without success, and it was quite frustrating as well.

I just went through your tutorials and I was ready to go. Thank you.

I was able to paste my own list of URLs into Excel, add the anchor tags and save it as HTML. I opened the file with OutWit, selected all URLs in the links widget, right-clicked and selected ‘Browse Through…’, and then the magic happened.

You guys rock, thank you,

C

February 10th, 2009 at 7:52 pm

Thank you so much for the compliment! You could also use a text editor to save the list as an HTML file. Thanks again for the useful suggestion! We always appreciate and encourage feedback and suggestions from our users.
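The trick described in the comment above, turning a plain list of URLs into an HTML page of links that the Hub can open and ‘Browse Through’, can also be scripted instead of done by hand in Excel or a text editor. A small Python sketch, assuming the URLs arrive one per line (for example copied out of a spreadsheet column); the helper name `urls_to_html` and the output file name `links.html` are hypothetical.

```python
import html
from pathlib import Path


def urls_to_html(urls):
    """Return an HTML page with one anchor tag per non-empty URL."""
    items = []
    for url in urls:
        url = url.strip()
        if not url:
            continue  # skip blank lines from the pasted list
        safe = html.escape(url, quote=True)  # escape &, <, > and quotes
        items.append(f'<li><a href="{safe}">{safe}</a></li>')
    body = "\n".join(items)
    return f"<!DOCTYPE html>\n<html><body><ul>\n{body}\n</ul></body></html>\n"


if __name__ == "__main__":
    pasted = """http://en.wikipedia.org/wiki/List_of_Nobel_Laureates_in_Physics
https://example.com/page?id=1&lang=en
"""
    Path("links.html").write_text(urls_to_html(pasted.splitlines()), encoding="utf-8")
```

Opening the generated file in the Hub should then list every URL in the links view, ready to be selected and browsed through as described above.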

March 12th, 2009 at 3:06 pm

Your tool is simply great!

Have you planned to add a feature similar to the URL generator in Happy Harvester?

That way it would be perfect.

March 12th, 2009 at 4:45 pm

Thank you very much for the compliment. I looked at the feature and it seems like it could be very interesting. I have given your suggestion to our developers to be considered for future updates.

July 16th, 2009 at 11:10 am

How do you clean the catch? After I do a catch and export it to Excel, there should be an easy way to remove all the info and start a new catch. Maybe I am just missing something.

July 16th, 2009 at 11:16 am

@Doug: Simply select all (with Ctrl + A, or Cmd + A), and then push the “Delete” key.

But we could add a shortcut for it (as in OutWit Images); this is a good suggestion.

Thanks.